DHS is using facial recognition technology to help rescue victims of child exploitation

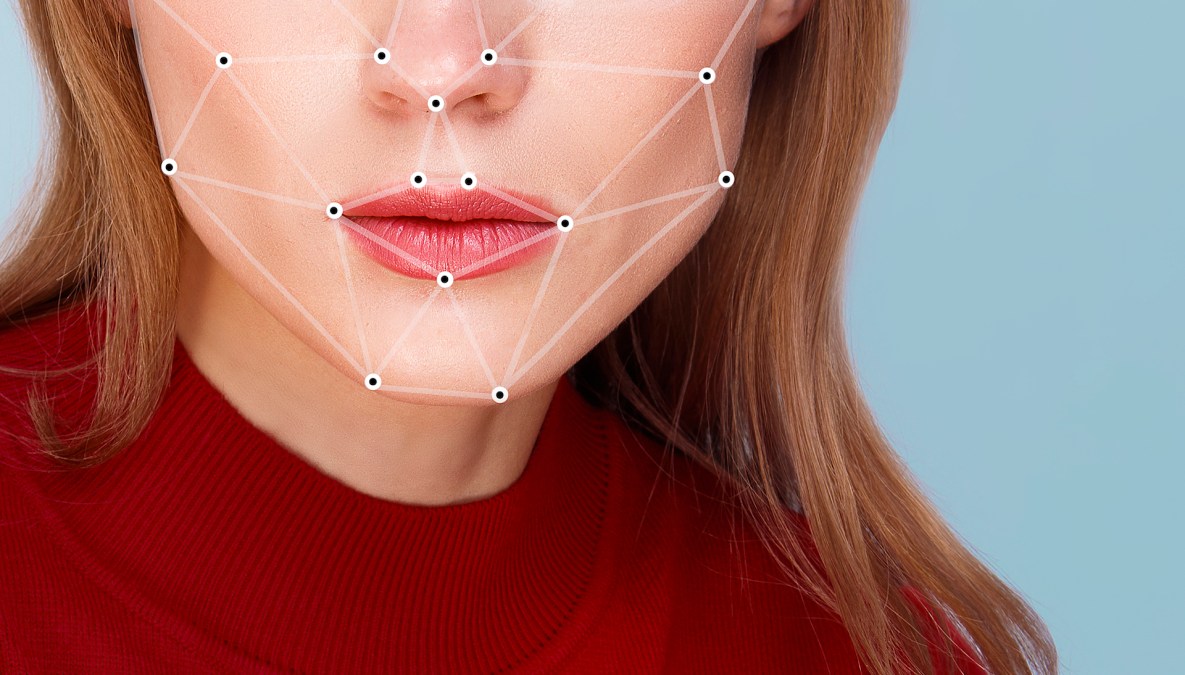

The Department of Homeland Security’s Science and Technology Directorate (S&T) is developing and testing facial recognition algorithms to help sift through images of child exploitation found on the internet and the dark web.

The project is called Child Exploitation Image Analytics (CHEXIA), and it aims to help investigators deal with the huge number of graphic and violent images of child abuse that they collect. Automatic facial recognition can help these investigators locate both victims and perpetrators more quickly, officials say.

“The data is staggering,” Science and Technology Directorate Program Manager Patricia Wolfhope said in a statement. “The number of reports received through the National Center for Missing and Exploited Children (NCMEC) cyber tip line has grown steadily each year, from 223,374 to 326,310 to 415,650 in 2010, 2011, and 2012, respectively. NCMEC’s analysis indicates the number of images being collected and traded by offenders worldwide continues to expand exponentially.”

S&T partnered with the Child Exploitation Investigations Unit at Homeland Security Investigations’ Cyber Crimes Center (C3) to explore how technology can help.

CHEXIA has two main parts: testing various facial recognition programs on datasets of seized child exploitation images to see which performs best, and integrating the best-performing algorithm into existing free media-forensics tools that law enforcement agencies can use.

According to a press release from earlier this month, CHEXIA is continuing to test programs but “thus far, the Intelligence Advanced Research Projects Agency Janus algorithms are outperforming all other algorithms against this very difficult data set.” IARPA operates under the Office of the Director of National Intelligence.

DHS is aiming to complete the CHEXIA project by December 2018.

“S&T expects these technologies to help find investigative leads that would otherwise be missed due to the sheer bulk of data and human limitations,” the press release reads.

Used elsewhere, facial recognition algorithms often draw scrutiny for intruding on personal privacy. However, this may be one area where society is willing to sacrifice some privacy in the interest of keeping more children safe.