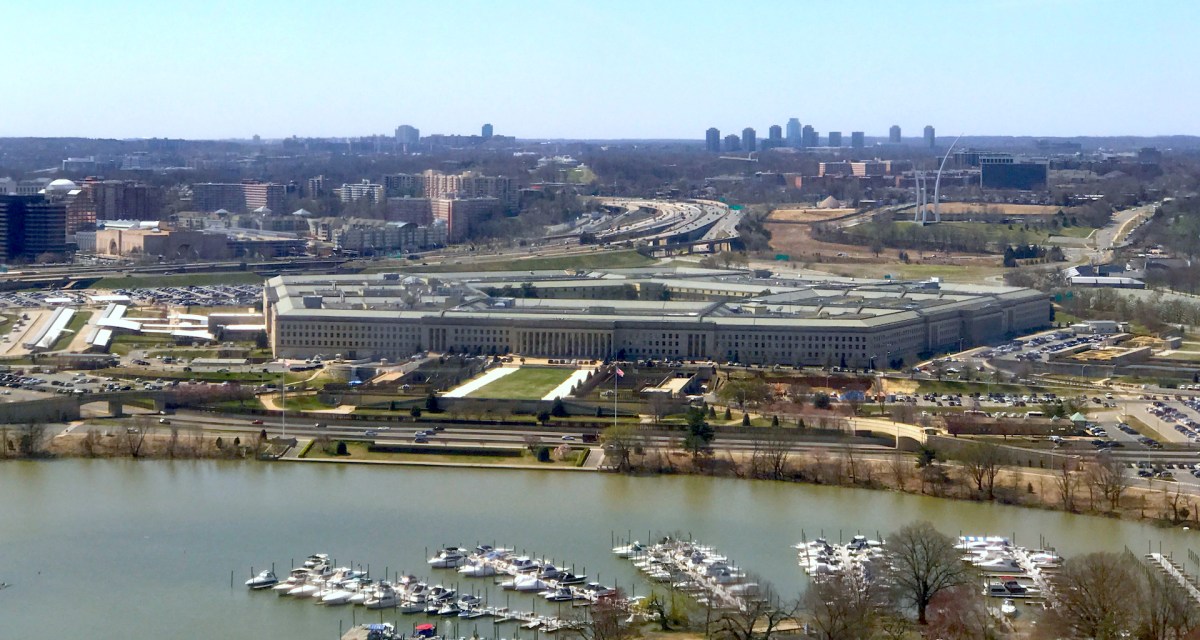

Pentagon actively working to combat adversarial AI

The Pentagon’s artificial intelligence shop is actively working on how to secure data and models from a newer threat: adversarial AI.

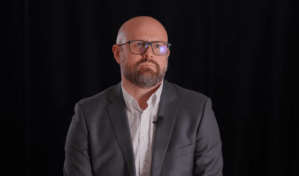

The Joint Artificial Intelligence Center is exploring how data-sharing and model-sharing can create a manifest that helps combat cyberattacks that attempt to impede algorithms or confuse them into releasing sensitive information, CTO Nand Mulchandani said Thursday at an ACT-IAC event.

With the JAIC deploying and scaling 32 AI products across the Department of Defense, spanning areas like predictive maintenance operations, cybersecurity and warfighter health, the data used to train these systems is its most valuable intellectual property and needs to be secured, Mulchandani said.

“The trick is to figure out what tech/products are ready to deploy and in what ‘domain,’” he told FedScoop. “We think that AI explainability, AI security, AI ethics, and AI testing are all tightly connected and have very tight collaboration between the various groups that are tackling these areas.”

The JAIC now has an AI ethicist on staff assisting with policymaking, though Mulchandani stopped short of saying a strategy for dealing with adversarial AI is in the works.

DOD isn’t the only federal agency emphasizing protections against adversarial AI.

A big part of the Department of Energy’s AI strategy is public-private partnerships addressing adversarial AI by securing datasets and establishing data provenance. Like DOD, DOE sees ethical AI principles as being interrelated.

Cheryl Ingstad, director of DOE’s Artificial Intelligence & Technology Office, floated the possibility of a tool that examines AI algorithms for bias, which would enforce ethical principles while also simply being good cyber hygiene.

“Adversarial AI is an area where we need to do a lot of innovation around it and create new principles and new processes and methodologies to address it,” Ingstad said in October.